SAP Data Intelligence Cloud has well-established capabilities for working with data contained within files, and a wide array of connection types available for target systems. However when you’re working with data in files that changes over time instead of a once-off extract, you’ll likely want to keep this data up to date in the target too. Let’s examine how we can use Data Intelligence Cloud to keep tables in Data Warehouse Cloud up to date as we change the contents of our file

Prerequisites

This blog post assumes that we’ve already set up a connection to a space within your Data Warehouse Cloud system.

Furthermore we’re going to need a table within the DWC Database User schema with a primary key defined. We’re going to walk this through below

Upserting

If the target database table has a primary key assigned, we can use UPSERTING to give us the behaviour we expect from the changed file. If an entry in our file has an entry with a matching primary key in the table, it will perform an UPDATE – if there’s no matching entry in the table it will INSERT the row instead.

For clarity, it’s important to note that any rows completely removed from the source file will not be removed from the target table.

Table Preparation

For our initial data load, we’re going to use a very simple schema. Copy the below contents and save it in a file called Employee.csv

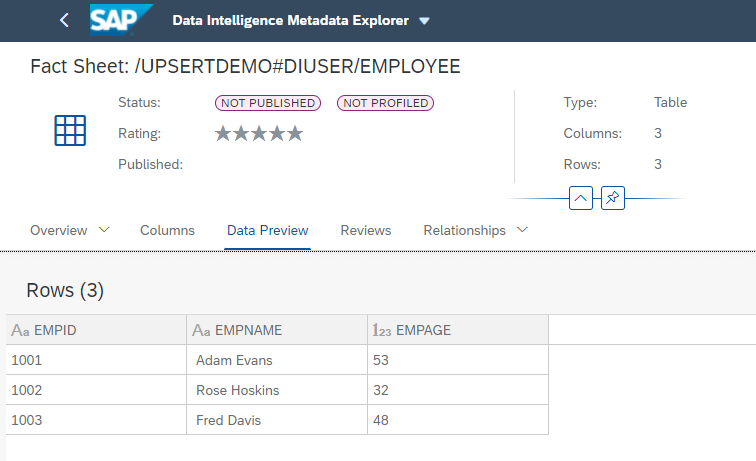

1001, Adam Evans, 53

1002, Rose Hoskins, 32

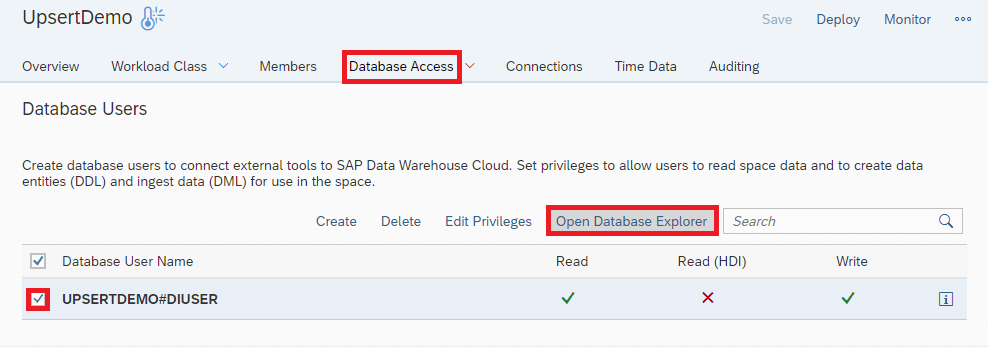

1003, Fred Davis, 48Within our Data Warehouse Cloud space, we want to navigate to the Database Access section. Here we find the Database User that was specified in our Data Intelligence Connection. Select this user on the left then click on Open Database Explorer

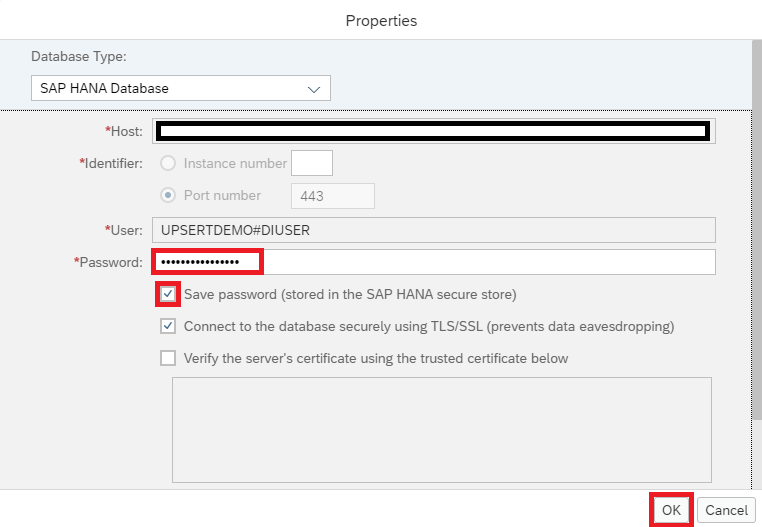

Once we reach the Database Explorer, we see a Database Connection Properties popup box. Here we want to enter the password for our Database User, and optionally save these credentials in the SAP HANA secure store before we click OK.

For the purposes of this blog post we’re going to directly create the table using the SQL Console. In a productive scenario you would probably want to define this table in a way that can be replicated and version controlled (perhaps using .hdbmigrationtable)

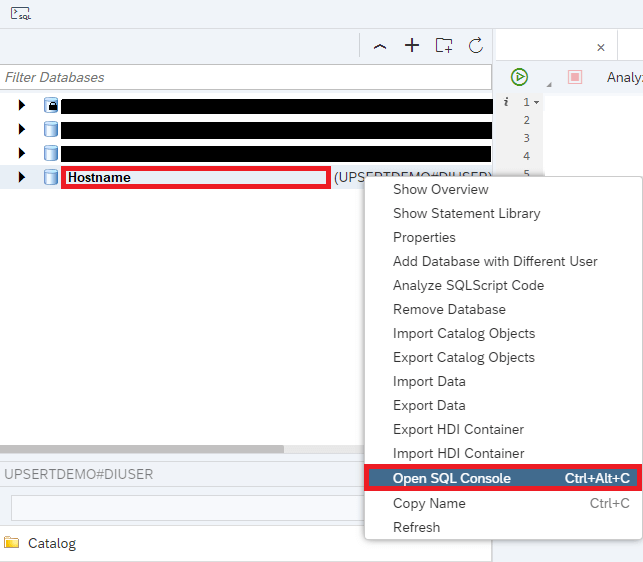

To open the SQL Console, we right click on the connection we just created, and then select Open SQL Console

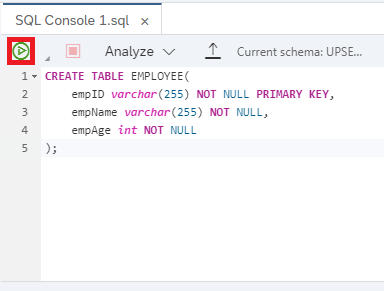

We want to use the below SQL Syntax inside the SQL Console to create our target table, specifying the Employee ID (empID) as the Primary Key

CREATE TABLE EMPLOYEE(

empID varchar(255) NOT NULL PRIMARY KEY,

empName varchar(255) NOT NULL,

empAge int NOT NULL

);Once the command has been copied into our console, we run it to create our target table

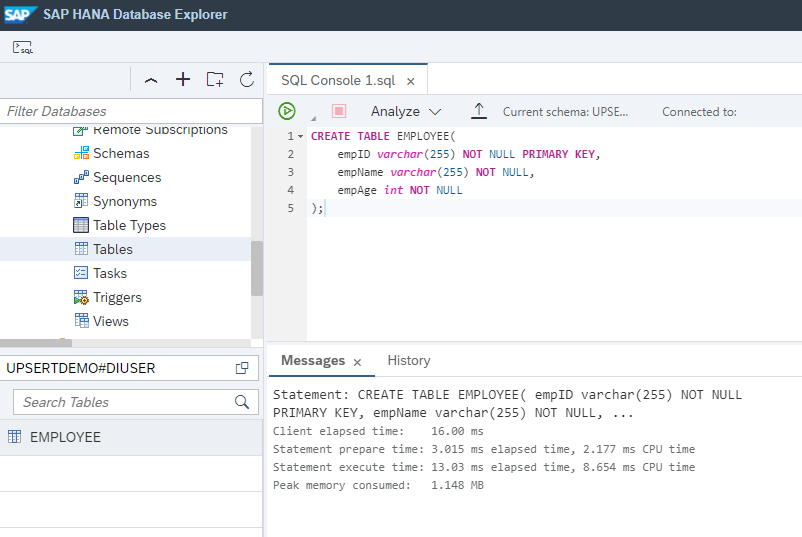

Once our table has been successfully created, we’ll see a message with some execution statistics. Additionally, if we want to verify it for ourselves we’ll find the table in the catalog on the left

Creating Our Pipeline

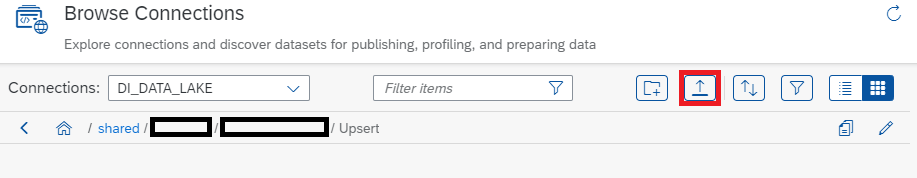

First things first, we’ll want to upload our Employee.csv file to one of our Data Intelligence Connections using the Metadata Explorer. For this blog post we’re using a folder within DI_DATA_LAKE, but this choice is arbitrary

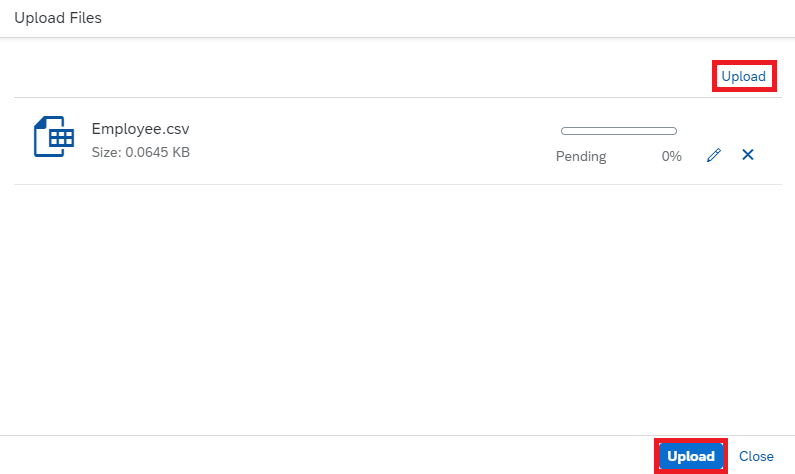

Within the Upload screen, click on Upload in the top right, select your Employee.csv file from your computer and then click on the Upload button on the bottom right

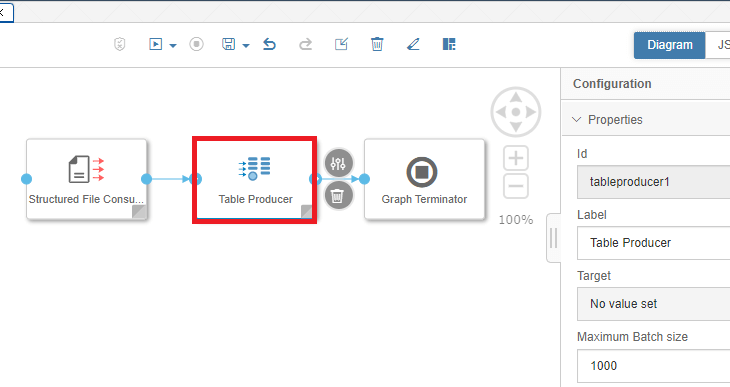

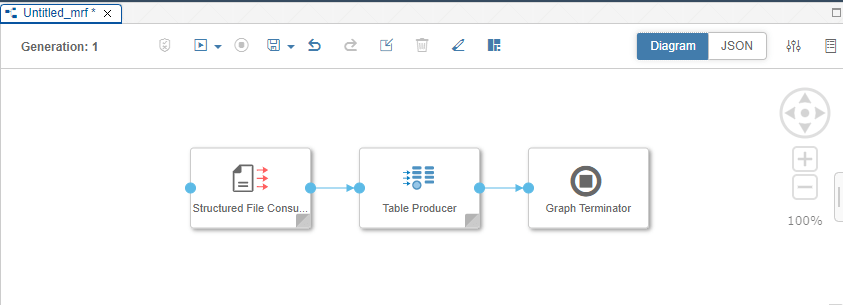

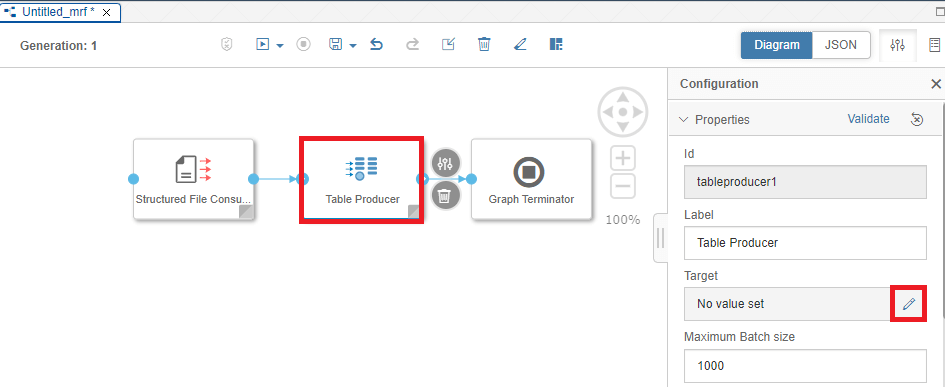

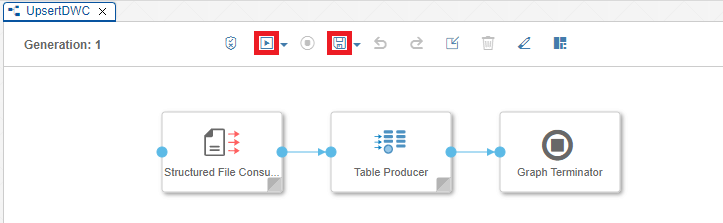

Once our file has uploaded, we can head to the Data Intelligence Modeler. Create a new Pipeline (Graph), then add and connect the following three Operators in sequence: Structured File Consumer->Table Producer->Graph Terminator

The Structured File Consumer will take our csv file from the DI_DATA_LAKE and read it in a form that can be processed. The Table Producer will then take this data and use it to UPSERT records into the table we just created. After this has completed, the Graph Terminator will end the processing of our Pipeline

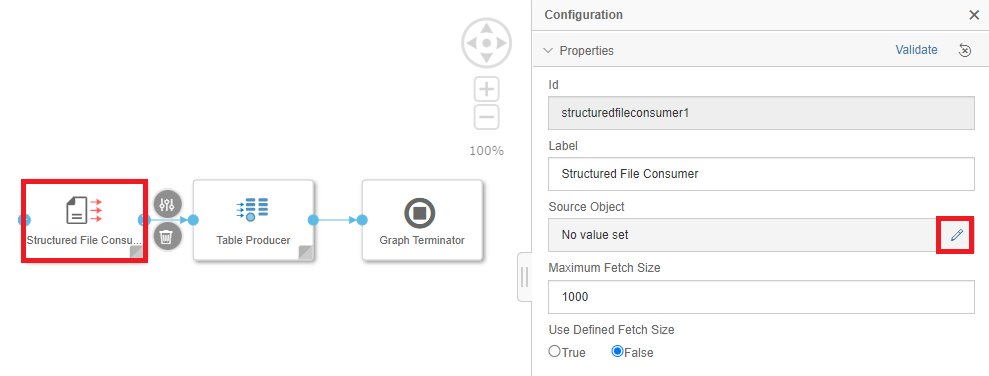

Let’s get our operators set up. First, select the Structured File Consumer, then edit the Source Object

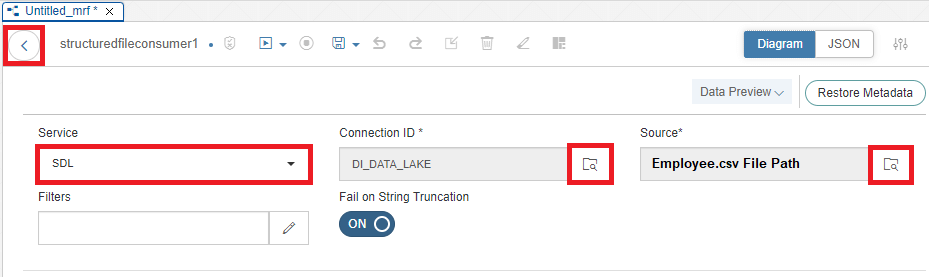

Select the SDL (Semantic Data Lake) Service, Connection ID (DI_DATA_LAKE) and the Source (File Path to our Employee.csv file) then click back

Next, we’re going to set up our Table Producer Operator

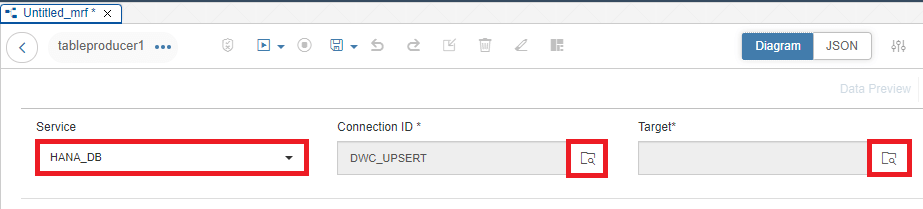

Set the Source to HANA_DB if it isn’t already set. Select our Connection ID (DWC_UPSERT), then click on the Target Box

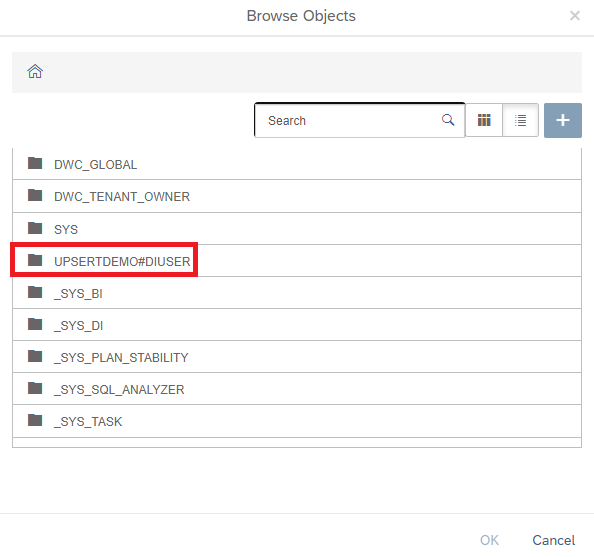

Select the Open SQL Schema that was created in Data Warehouse Cloud when creating the Database User (in this example, UPSERTDEMO#DIUSER)

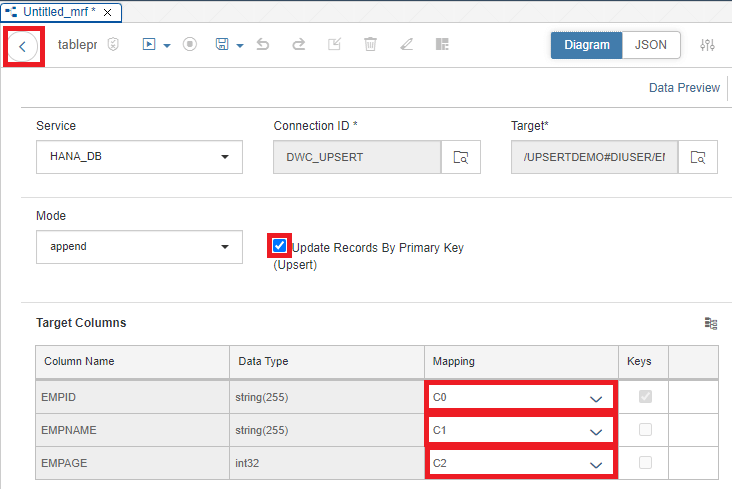

Select our Employee table, then click OK. Select the Upsert checkbox, and map our three table columns to C0, C1 & C2 in our Target Columns. Select the back arrow

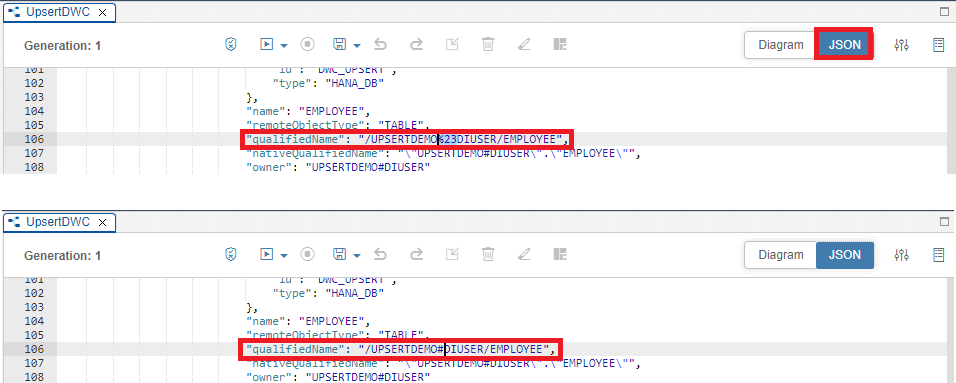

Save our new pipeline. Before we run our Pipeline, we’ll need to employ a quick workaround. When creating our Database User in DWC, the Schema is created with the hashtag character (#). However, in Data Intelligence the Table Consumer doesn’t allow the hashtag character in its targets.

Switch back to the Diagram view, then Save and Run the Pipeline

Success

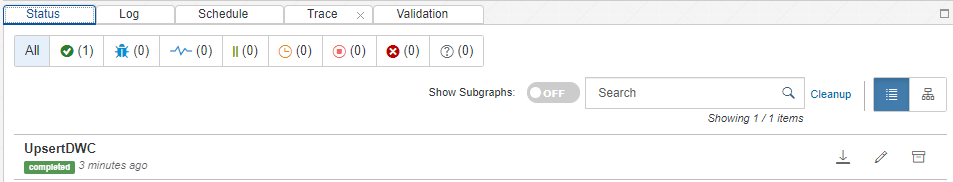

Once our Pipeline Run has been successfully completed, we’ll see it at the bottom of the Modeler

We can also verify the contents using the Data Intelligence Metadata Explorer