We can leverage SAP HANA Cloud as a source in SAP Datasphere. In addition, the SAP HANA Cloud instance on which Datasphere is hosted can be accessed for HDI (HANA Deployment Infrastructure) development.

Prerequisites

- You have an SAP BTP Pay-As-You-Go account

- You have created and added a Datasphere instance in SAP BTP

- You have a space in Datasphere with a database user that has read/write access and HDI consumption enabled

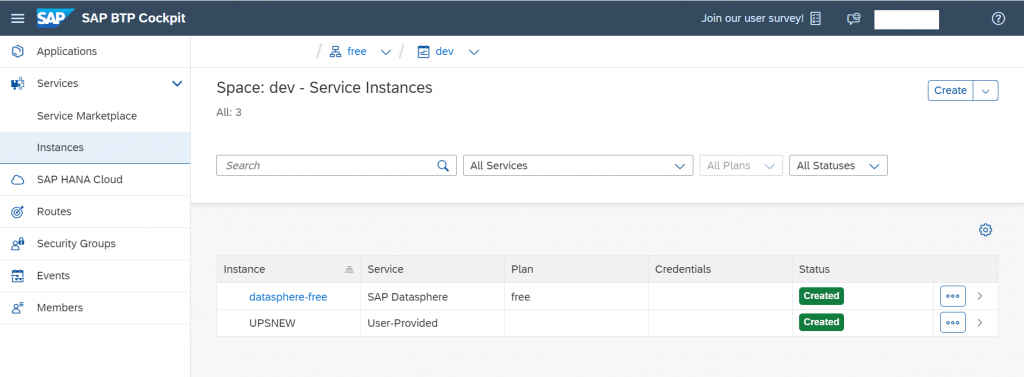

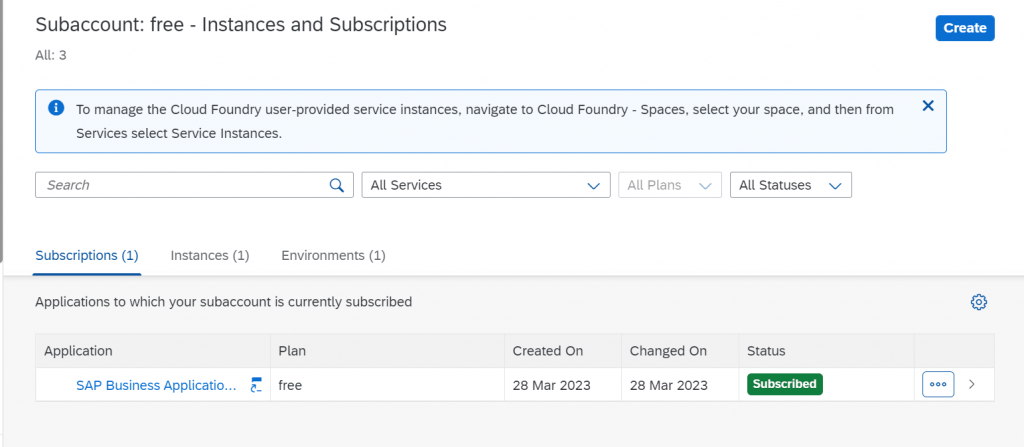

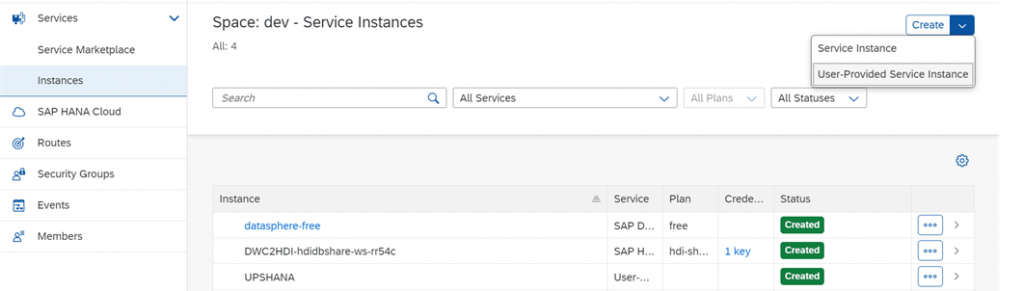

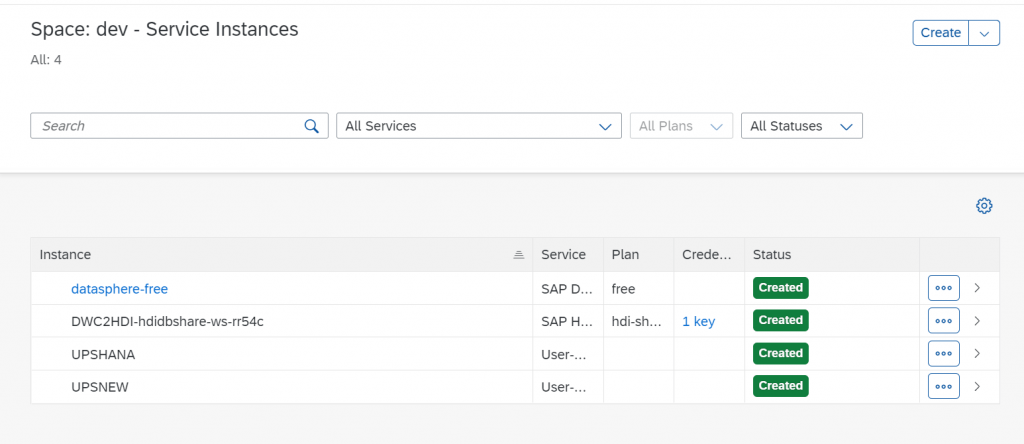

For now, I have enabled the free Datasphere subscription. The Datasphere instance runs in the space “dev” under the subaccount “free” in SAP Business Technology Platform (BTP).

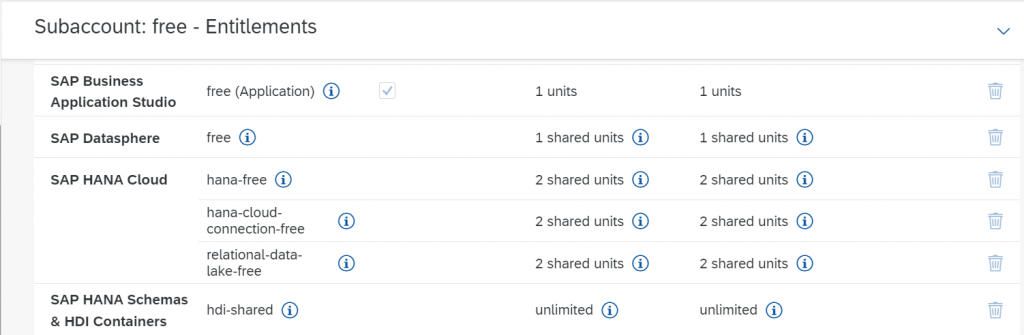

If you are on the free plan, also subscribe to SAP Business Application Studio (BAS) and make sure that the SAP HANA Schemas & HDI Containers service is part of your service plans.

Mapping the Datasphere and BTP spaces

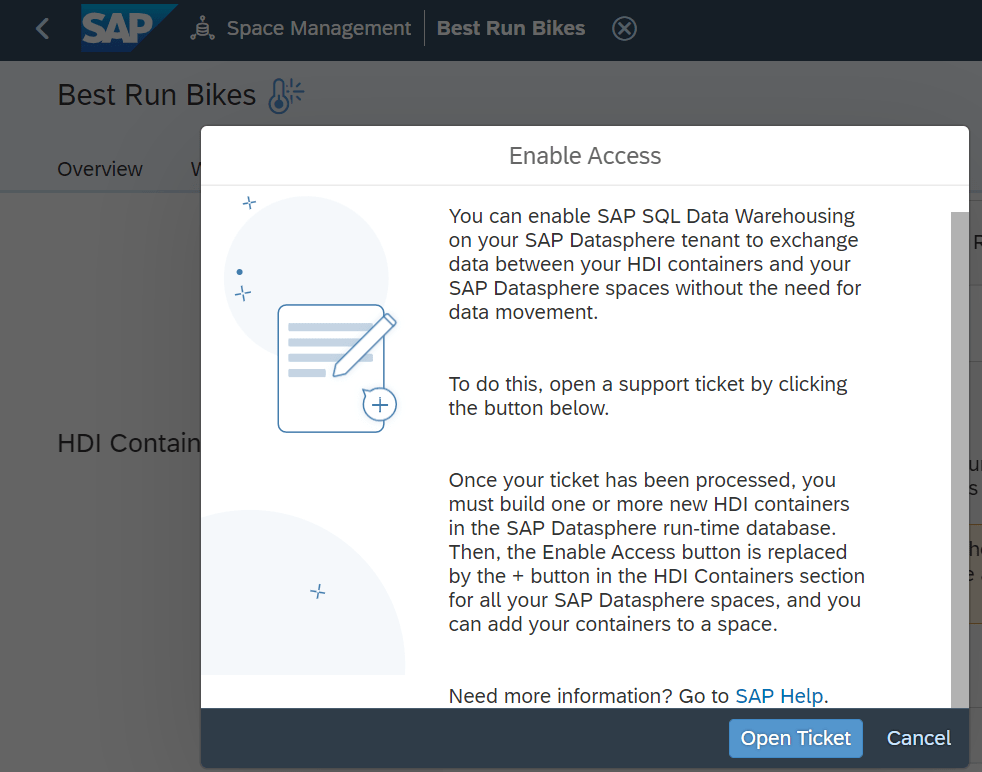

In Datasphere, navigate to the HDI Containers section in Space Management and raise an SAP ticket with the following details:

- BTP Org ID – <<Fetch from the overview page of the BTP subaccount>>

- Space ID – <<After opening the space, get the space ID from the URL>>

- Datasphere tenant ID – <<From the Datasphere About section>>

This enables HDI container mapping in SAP Datasphere, that is, mapping the BTP space to the Datasphere space. I am using the “Best Run Bikes” space.

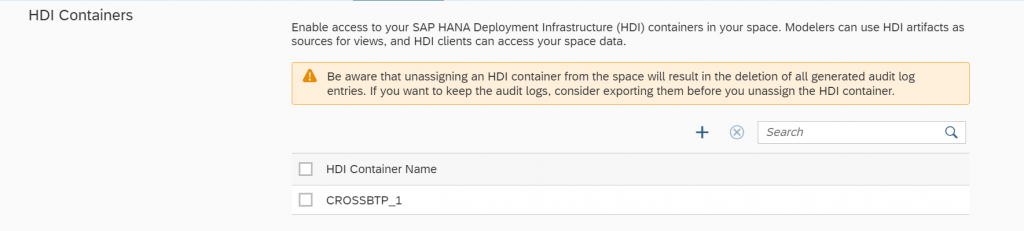

Once the ticket is resolved (that is, after SAP has completed the instance mapping), we can use BAS to add HDI containers to the SAP HANA Cloud instance on which Datasphere is hosted. The created HDI containers can then be consumed in Datasphere.

HDI Development in BAS

To enable bidirectional access between the Datasphere space and the HDI container, follow the steps below. This makes Datasphere objects accessible in the HDI container, and HDI container objects accessible in the Datasphere space.

STEP 1 – Create a User-Provided Service in the BTP Space

- Log in to the BTP cockpit and navigate to the space where Datasphere is running.

- Create a user-provided service (UPS) in the space. The details for the UPS come from the Datasphere space: in the Database Users section of Space Management, click the information icon and copy the JSON shown under the HDI Consumption section.

- The same credentials can be used to log in to the Open SQL schema of the space.

```json
{
  "user": "<<Database user name>>",
  "password": "<<Database user password>>",
  "schema": "<<Space name>>",
  "tags": [
    "hana"
  ]
}
```

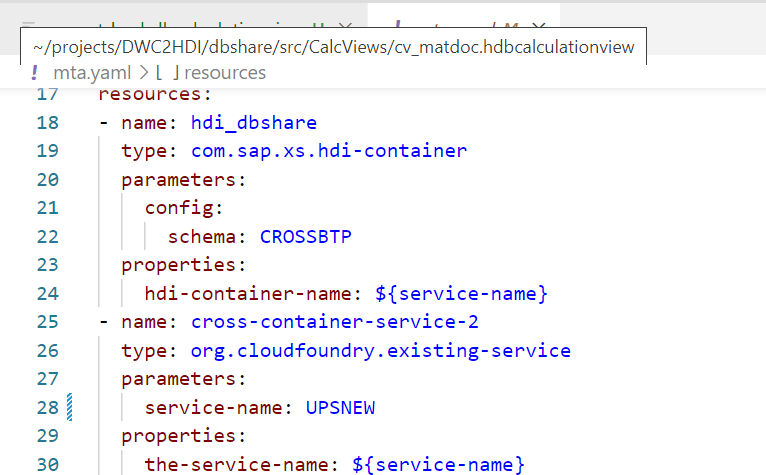

UPSNEW is the user-provided service we will be using.
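If you prefer the Cloud Foundry CLI over the cockpit, the same UPS can be created with `cf create-user-provided-service`. The Python sketch below is a hypothetical helper (not part of the referenced project) that turns the JSON copied from Datasphere into that command. It assumes your cf CLI version supports the `-t` flag for tags, so the `tags` array is moved out of the credentials and passed there; the user name, password, and schema values are placeholders.

```python
import json
import shlex


def cups_command(service_name: str, copied: dict) -> str:
    """Build a `cf create-user-provided-service` command line (hypothetical helper).

    `copied` is the JSON copied from the HDI Consumption section in Datasphere.
    The "tags" entry is passed with -t instead of being stored as a credential.
    """
    credentials = {key: value for key, value in copied.items() if key != "tags"}
    args = [
        "cf", "create-user-provided-service", service_name,
        "-p", json.dumps(credentials),
    ]
    tags = copied.get("tags", [])
    if tags:
        args += ["-t", ",".join(tags)]
    # shlex.quote keeps the JSON (including the '#' in the user name) intact in a POSIX shell.
    return " ".join(shlex.quote(arg) for arg in args)


if __name__ == "__main__":
    # Placeholder values: replace them with the details shown in your Datasphere space.
    copied_json = {
        "user": "SPACE#HDIUSER",
        "password": "<password>",
        "schema": "SPACE",
        "tags": ["hana"],
    }
    print(cups_command("UPSNEW", copied_json))
```

Run the printed command after `cf target` points at the BTP space where Datasphere is running.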

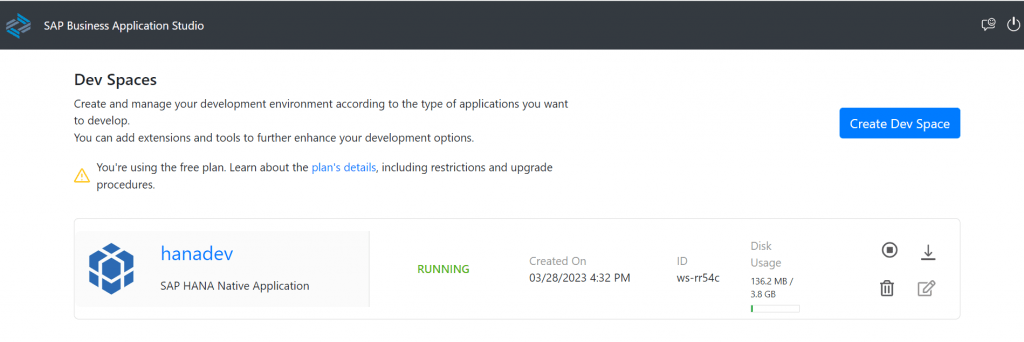

STEP 2 – HDI Development in Business Application Studio

- Create a dev space in BAS and start it. I used the project from the Git repository https://github.com/coolvivz/DWC2HDI, which contains all the files and folders needed to start development

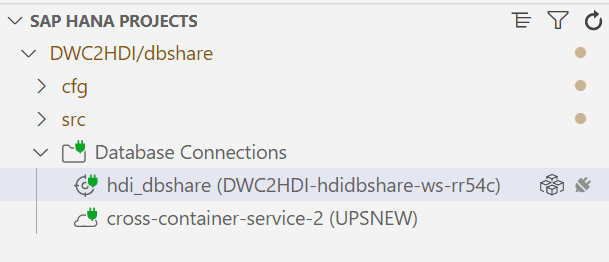

- Clone the project from Git and adjust it to the UPS we have created

- Bind cross-container-service-2 to the UPS created in the previous step

- Bind the hdi_dbshare service to the default service instance

- All default Datasphere database users are created with a “#” symbol in their names, but the VCAP_SERVICES environment variable does not recognize the “#” symbol. So, in the .env file, create a variable for the user name and reference it in VCAP_SERVICES:

  ```
  USER1="<<Datasphere Database User Name>>"
  ```

  Towards the end of VCAP_SERVICES, replace the database user name with this variable, like this: `"user":"${USER1}"`

- Replace the UPS name in the hdbsynonymconfig and hdbgrants files

- Add synonyms for the Datasphere objects that should be accessible in the HDI container

- Now deploy the cfg folder, followed by the src folder. The HDI container (schema) name is CROSSBTP; if required, this schema name can be changed in the YAML file
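The `${USER1}` indirection in the .env file can be illustrated with Python's `string.Template`, which uses the same `${NAME}` placeholder syntax. This is only an illustration, assuming the tool that reads the .env file expands `${...}` references in a comparable way; it does not reproduce how BAS loads the file. The user name and the trimmed-down VCAP_SERVICES content are hypothetical placeholders.

```python
import json
from string import Template

# Hypothetical .env value: Datasphere database user names contain '#'.
ENV = {"USER1": "BESTRUNBIKES#HDIUSER"}

# Trimmed-down, hypothetical VCAP_SERVICES entry that references ${USER1}
# instead of spelling out the user name.
VCAP_TEMPLATE = Template(
    '{"user-provided": [{"name": "UPSNEW", "tags": ["hana"], '
    '"credentials": {"user": "${USER1}", "schema": "BESTRUNBIKES"}}]}'
)


def expand_vcap(template: Template, variables: dict) -> dict:
    """Replace ${NAME} placeholders and parse the result as JSON.

    substitute() raises KeyError for an undefined name, so a mismatch between
    the variable defined in .env and the one referenced is reported immediately.
    """
    return json.loads(template.substitute(variables))


if __name__ == "__main__":
    vcap = expand_vcap(VCAP_TEMPLATE, ENV)
    print(vcap["user-provided"][0]["credentials"]["user"])
```

The variable name defined in .env and the one referenced in VCAP_SERVICES must match exactly; otherwise the expansion fails.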

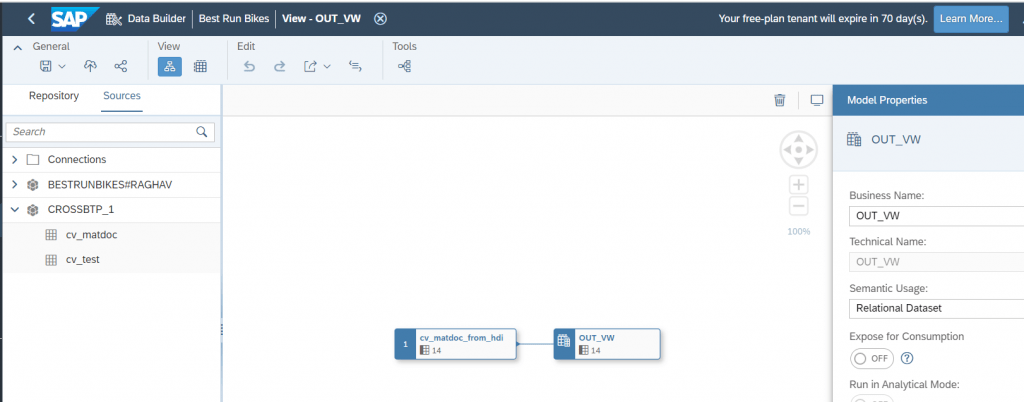

- In Datasphere, you will now be able to see the HDI containers

- The Git repository gives us a ready-to-use project. We can then add the required objects from the Datasphere space as synonyms and build calculation views, procedures, or flowgraphs on top of them.

- These objects can also be integrated with data from other sources. Using the SAP HANA Cloud instance underneath Datasphere in this way supports complex implementations.

STEP 3 – Objects in Datasphere that are exposed to the HDI container

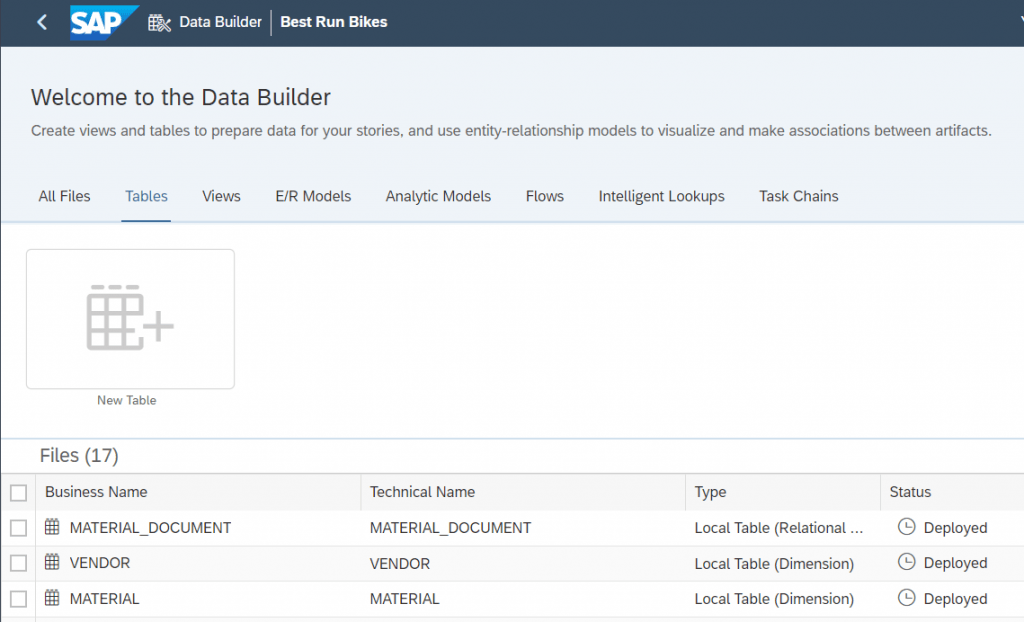

- I have mapped the standard space “Best Run Bikes” to the BTP space. The following tables are available in the Datasphere space: Material, Vendor, and Material Document

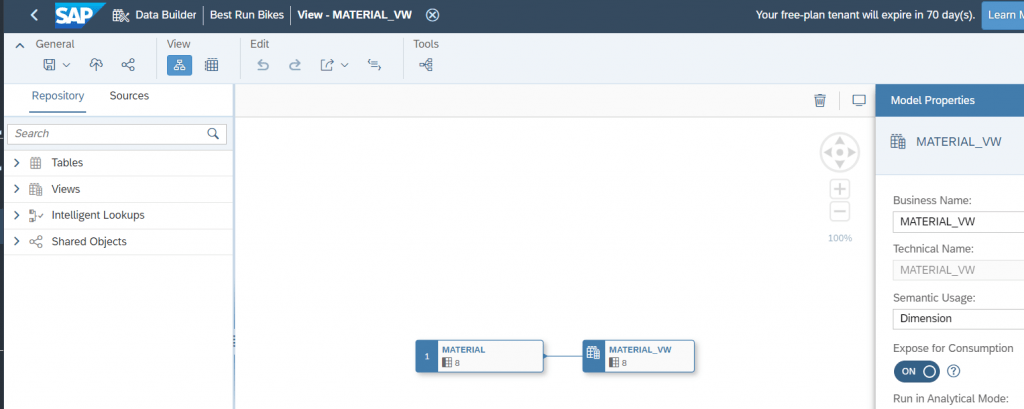

- To expose the tables to the Open SQL schema, and in turn use them in HDI development, one-to-one views are created on top of them and exposed for consumption

- In the project cloned from Git, the following changes are required:

  - Add these views as synonyms

  - Include them in the hdbgrants file

  - The default_access_role.hdbrole file should have an entry for each new view. The “SELECT CDS METADATA” privilege is necessary to view the columns of the view
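The synonym and grant entries follow a fixed pattern per view, so they can be generated rather than typed by hand. The Python sketch below builds `.hdbsynonym`, `.hdbsynonymconfig`, and `.hdbgrants` content for a list of views. The view names, the `BESTRUNBIKES` schema, the `SYN_` prefix, and the file names printed are hypothetical; `schema.configure` takes the target schema from the `schema` field of the UPSNEW credentials at deployment time. Compare the output with the files in the cloned project before using it.

```python
import json

SERVICE = "UPSNEW"                # user-provided service from STEP 1
SPACE_SCHEMA = "BESTRUNBIKES"     # hypothetical schema (space ID) of the Datasphere space
VIEWS = ["V_MATERIAL", "V_VENDOR", "V_MATERIAL_DOCUMENT"]  # hypothetical view names


def synonym_name(view: str) -> str:
    # Hypothetical naming convention for synonyms inside the HDI container.
    return f"SYN_{view.upper()}"


def hdbsynonym(views):
    # Assumes the common pattern of declaring synonyms without a target,
    # with the target supplied by the .hdbsynonymconfig file.
    return {synonym_name(v): {} for v in views}


def hdbsynonymconfig(views, service):
    # "<service>/schema" reads the schema from the service credentials.
    return {
        synonym_name(v): {"target": {"object": v, "schema.configure": f"{service}/schema"}}
        for v in views
    }


def hdbgrants(views, service, schema):
    # The object owner typically needs the grant option so that container objects
    # built on the views (e.g. calculation views) can be granted further.
    owner = [{"schema": schema, "name": v, "privileges_with_grant_option": ["SELECT"]} for v in views]
    app_user = [{"schema": schema, "name": v, "privileges": ["SELECT"]} for v in views]
    return {
        service: {
            "object_owner": {"object_privileges": owner},
            "application_user": {"object_privileges": app_user},
        }
    }


if __name__ == "__main__":
    for title, content in [
        ("synonyms.hdbsynonym", hdbsynonym(VIEWS)),
        ("synonyms.hdbsynonymconfig", hdbsynonymconfig(VIEWS, SERVICE)),
        ("datasphere.hdbgrants", hdbgrants(VIEWS, SERVICE, SPACE_SCHEMA)),
    ]:
        print(f"--- {title} (hypothetical file name)")
        print(json.dumps(content, indent=2))
```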

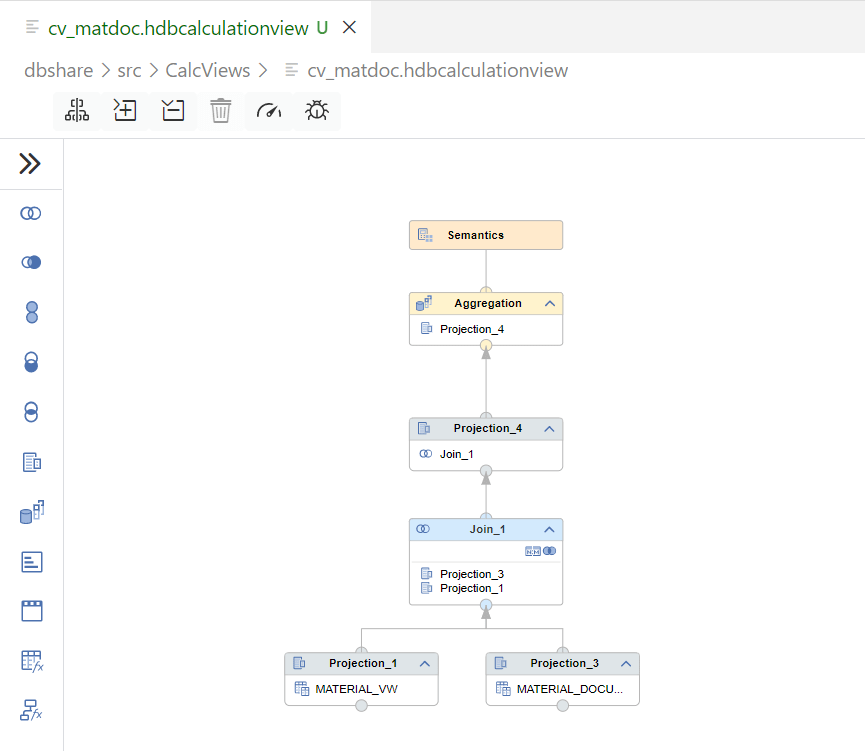
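The default_access_role.hdbrole entries can also be added with a small script. This hypothetical Python helper adds object privileges with `SELECT` and `SELECT CDS METADATA` to a parsed .hdbrole document and skips entries that already exist. It assumes the entries reference the synonyms created for the Datasphere views (object type `SYNONYM`) and uses placeholder synonym names; adjust the type and names to match your project.

```python
import json

# Privileges named in the text; SELECT CDS METADATA is needed to see the view's columns.
PRIVILEGES = ["SELECT", "SELECT CDS METADATA"]


def add_object_privileges(role_doc: dict, names, obj_type: str = "SYNONYM") -> dict:
    """Add one object_privileges entry per name to an .hdbrole document.

    Entries that already exist (same name and type) are left unchanged, so the
    function can be re-run after adding further views.
    """
    role = role_doc.setdefault("role", {})
    entries = role.setdefault("object_privileges", [])
    present = {(e.get("name"), e.get("type")) for e in entries}
    for name in names:
        if (name, obj_type) not in present:
            entries.append({"name": name, "type": obj_type, "privileges": list(PRIVILEGES)})
            present.add((name, obj_type))
    return role_doc


if __name__ == "__main__":
    # Minimal role document; in practice, load the existing default_access_role.hdbrole.
    role_doc = {"role": {"name": "default_access_role"}}
    add_object_privileges(role_doc, ["SYN_V_MATERIAL", "SYN_V_MATERIAL_DOCUMENT"])
    print(json.dumps(role_doc, indent=2))
```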

- Create a calculation view or procedure using the objects from Datasphere. In this case, cv_matdoc is created by joining two of the views. It can also be integrated or blended with data from other sources.

- After deployment, the newly added objects are accessible from Datasphere. The cv_matdoc calculation view can be accessed in Datasphere, further enriched, and exposed for reporting.

- Below is a screenshot from the Data Builder of the Datasphere “Best Run Bikes” space, using the calculation view from the CROSSBTP HDI container

Summary

We have now successfully used the SAP HANA Cloud instance underneath Datasphere for HDI development. By using Datasphere as a service in BTP and mapping the Datasphere space to a BTP space, we can perform complex developments, such as blending different data sets in HDI containers. The results can be accessed back in Datasphere and exposed for reporting, or even exposed as an OData service for consumption.